One of the challenges of trying to get

people to improve their statistical inferences is access to good software.

After 32 years, SPSS still does not give a Cohen’s d effect size when researchers

perform a t-test. I’m a big fan of R nowadays, but I still remember when I

thought R looked so complex I was convinced I was not smart enough to learn how

to use it. And therefore, I’ve always tried to make statistics accessible to a

larger, non-R using audience. I know there is a need for this – my paper on

effect sizes from 2013 will reach 600 citations this week, and the spreadsheet

that comes with the article is a big part of its success.

So when I wrote an article about the benefits of equivalence testing for psychologists, I also made a spreadsheet.

But really, what we want is easy-to-use software that combines all the ways in

which you can improve your inferences. And in recent years, we see some great

SPSS alternatives that try to do just that, such as PSPP, JASP, and more recently, jamovi.

Jamovi is made by developers who used to

work on JASP, and you’ll see JASP and

jamovi look and feel very similar. I’d recommend downloading and installing

both these excellent free software packages. Where JASP aims to provide

Bayesian statistical methods in an accessible and user-friendly way (and you

can do all sorts of Bayesian analyses in JASP), the core aim of jamovi is to make software that is ‘“community driven”, where anyone can

develop and publish analyses, and make them available to a wide audience’. This

means that if I develop statistical analyses, such as equivalence tests, I can

make these available through jamovi for anyone who wants to use these tests. I

think that’s really cool, and I’m super excited my equivalence testing package

TOSTER is now available as a jamovi module.

You can download the latest version of jamovi

here. The latest version at the time of writing is 0.7.0.2. Install, and

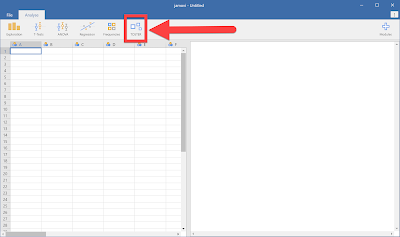

open the software. Then, install the TOSTER module. Click the + module button:

Install the TOSTER module:

And you should see a new menu option in the

task bar, called TOSTER:

To play around with some real data, let’s

download the data from Study 7 of Yap et al. (in press)

in press, from the Open Science Framework: https://osf.io/pzqj2/.

This study examines the effect of weather (good vs bad days) on mood and life

satisfaction. Like any researcher who takes science seriously, Yap, Wortman,

Anusic, Baker, Scherer, Donnellan, and Lucas made their data available with the

publication. After downloading the data, we need to replace the missing values

indicated with NA with “” in a text editor (CTRL H, find and replace), and then

we can read in the data in jamovi. If you want to follow along, you can also

directly download the jamovi file

here.
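If you prefer not to do the find-and-replace by hand, the same cleanup can be sketched in a few lines of Python. This is only a sketch under assumptions: it assumes the downloaded file is comma-separated, and the function name `blank_out_na` and the tiny inline sample are made up for illustration (only the column names `condition` and `lifeSat2` come from the data set).

```python
import csv
import io


def blank_out_na(csv_text, na_token="NA"):
    """Return csv_text with every cell that is exactly na_token emptied."""
    reader = csv.reader(io.StringIO(csv_text))
    out = io.StringIO()
    writer = csv.writer(out, lineterminator="\n")
    for row in reader:
        # Only whole cells are replaced, so a value that merely contains
        # the letters "NA" (e.g. "NATION") is left untouched.
        writer.writerow(["" if cell == na_token else cell for cell in row])
    return out.getvalue()


# Made-up miniature example, not the real data.
sample = "condition,lifeSat2\n1,5\n2,NA\n"
cleaned = blank_out_na(sample)
print(cleaned)
```

Compared with a blind find-and-replace, working cell by cell avoids accidentally changing text that just happens to contain "NA". To clean the real file you would read its contents, pass them through the function, and write the result to a new file.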

Then, we can just click the TOSTER menu,

select a TOST independent samples t-test, select ‘condition’ as condition, and

analyze for example the ‘lifeSat2’ variable, or life satisfaction. Then we need

to select an equivalence bound. For this DV we have data from approximately 117

people on good days, and 167 people on bad days. We need 136 participants in

each condition to have 90% power to reject effects of d = 0.4 or larger, so let’s

select d = 0.4 as an equivalence bound. I’m not saying smaller effects are not

practically relevant – they might very well be. But if the authors were

interested in smaller effects, they would have collected more data. So I’m

assuming here the authors thought an effect of d = 0.4 would be small enough to

make them reconsider the original effect by Schwarz & Clore (1983), which

was quite a bit larger with a d = 1.38.
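The sample size mentioned above can be checked with a rough calculation. The sketch below uses a common normal-approximation formula for the two one-sided tests procedure, n = 2(z_{1-α} + z_{1-β/2})² / d² per group, which assumes the true effect is exactly zero and the bounds are symmetric. The function name `tost_n_per_group` is my own, and I am assuming (not claiming) that this is close to what dedicated power functions compute.

```python
from math import ceil
from statistics import NormalDist


def tost_n_per_group(bound_d, alpha=0.05, power=0.90):
    """Approximate n per group for a two-sample TOST with bounds +/- bound_d.

    Normal approximation, assuming a true effect of exactly zero.
    """
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha)
    beta = 1 - power
    # Both one-sided tests must reject, so beta is split over the two tests.
    z_beta = z.inv_cdf(1 - beta / 2)
    return ceil(2 * (z_alpha + z_beta) ** 2 / bound_d ** 2)


n_needed = tost_n_per_group(0.4)
print(n_needed)  # 136
```

Halving the bound to d = 0.2 roughly quadruples the required sample size, which is why the choice of equivalence bound matters so much for how much data you need.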

In the screenshot above you see the analysis and

the results. By default, TOSTER uses Welch’s t-test, which is preferable over

Student’s t-test (as we explain in this recent article), but if

you want to reproduce the results in the original article, you can check the

‘Assume equal variances’ checkbox. To conclude equivalence in a two-sided test,

we need to be able to reject both equivalence bounds, and with p-values of

0.002 and < 0.001, we do. Thus, we can reject an effect larger than d = 0.4

or smaller than d = -0.4, and given these equivalence bounds, conclude the

effect is too small to be considered support for the presence of an effect that

is large enough, for our current purposes, to matter.
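The logic of rejecting both bounds can be sketched from summary statistics. This is a simplified illustration, not the TOSTER implementation: it uses a large-sample normal approximation instead of Welch's t distribution, it converts the standardized bound to raw units with the root-mean-square of the two standard deviations (an assumption on my part), and the means and standard deviations below are invented. Only the group sizes of roughly 117 and 167 come from the text.

```python
from math import sqrt
from statistics import NormalDist


def tost_two_sample(m1, sd1, n1, m2, sd2, n2, bound_d, alpha=0.05):
    """Two one-sided tests for two independent groups (normal approximation)."""
    # Convert the standardized bound to raw units (assumed convention).
    sd_avg = sqrt((sd1 ** 2 + sd2 ** 2) / 2)
    low, high = -bound_d * sd_avg, bound_d * sd_avg

    diff = m1 - m2
    se = sqrt(sd1 ** 2 / n1 + sd2 ** 2 / n2)  # unequal-variance standard error

    z = NormalDist()
    # Test 1: can we reject "the difference is at or below the lower bound"?
    p_lower = 1 - z.cdf((diff - low) / se)
    # Test 2: can we reject "the difference is at or above the upper bound"?
    p_upper = z.cdf((diff - high) / se)

    # Equivalence is concluded only if BOTH one-sided tests are significant.
    return p_lower, p_upper, max(p_lower, p_upper) < alpha


# Hypothetical means and SDs, for illustration only.
p_lower, p_upper, equivalent = tost_two_sample(
    m1=5.1, sd1=1.5, n1=117, m2=5.0, sd2=1.5, n2=167, bound_d=0.4
)
print(p_lower, p_upper, equivalent)
```

Because both tests have to reject, the larger of the two p-values decides the outcome. If the observed difference sits exactly on a bound, the corresponding p-value is 0.5 and equivalence cannot be concluded.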

Jamovi runs on R, and it’s a great way to

start to explore R itself, because you can easily reproduce the analysis we

just did in R. To use equivalence tests with R, we can download the original

datafile (R will have no problems with NA as missing values), and read it into

R. Then, in the top right corner of

jamovi, click the … options window, and check the box ‘syntax mode’.

You’ll see the output window changing to

the input and output style of R. You can simply right-click the syntax at the

top, choose Syntax > Copy, then go to R and paste the syntax

in R:

Running this code gives you exactly the

same results as jamovi.

I collaborated a lot with Jonathon Love on

getting the TOSTER package ready for jamovi. The team is incredibly helpful, so

if you have a nice statistics package that you want to make available to a huge

‘not-yet-R-using’ community, I would totally recommend checking out the developers hub and getting started! We are seeing

all sorts of cool power-analysis

Shiny apps, meta-analysis spreadsheets,

and meta-science tools like p-checker

that now live on websites all over the internet, but that could all find a good

home in jamovi. If you already have the R code, all you need to do is make it

available as a module!

If you use it, you can cite it as: Lakens, D. (in press). Equivalence tests: A practical primer for t-tests, correlations, and meta-analyses. Social Psychological and Personality Science. DOI: 10.1177/1948550617697177

Nice package (ahem). Thanks for providing that. I did find one small issue you might want to consider changing. When I used the sample Tooth Growth data set that comes with jamovi, the summary Equivalence Bounds table gave a raw difference that was negative, but the optional plot shows a positive difference. That also made the TOST results confusing, because it showed an Upper test that was significant while the Lower wasn't - the opposite of what the graph suggested. I did figure it out once I looked at the second table, but a consistent direction of the difference would make things easier on the user. Very helpful, though, in running those tests!

Hello Daniël, since you know both JASP and Jamovi, which one would you recommend for introductory level stats courses? I'm looking for

- a menu driven program (so no R or Python)

- that is beginner friendly & easy to use

- and offers (some) Bayesian analyses next to the classical ones.

I had set my mind on JASP and have even done two test courses with it. Students preferred it over SPSS, missed some features however. And now Jamovi comes along, thank you all very much :-( just kidding ;-) ;-)

Have you used Jamovi with students? Could you compare Jamovi and JASP? Thanks.

Hi, I don't know what was missing - I'd directly contact the developers of both packages and ask them if they have, or will have, what you need.

Thank you, Daniël. What we were missing was:

- edit data (solved in JASP as I understand; jamovi does it too)

- confidence intervals and credible regions not only expressed in Cohen's d, but also in absolute terms

- frequentist tools: enter expected values in the Chi-square test

- bayesian tools: be able to center the prior on a non-zero value.

I'd better install Jamovi, test it, and compare it to JASP. And maybe contact the devs regarding missing stuff.

Thanks for the reply!

Hello Daniel. I'm brand new to equivalence testing and I have been doing my best to understand the theory, which I think I do. I'm trying to establish whether or not some independent samples are equivalent and I have 160 participants in each group; how do I determine which effect size to use for my equivalence bounds?

Hi, I explain how you can go about doing this in my paper: https://osf.io/preprints/psyarxiv/97gpc/

Thank you Daniel!

From,

Moderately stressed thesis student. <3

Hey Daniel,

Conducted a 2x4 Mixed ANOVA (2 conditions & 4 time points). Non-significant interaction so the conditions do not have a significant effect on the DV within the time points, however, I am looking to determine if the effects across conditions at each respective time point are equivalent and if all 4 are I am attempting to argue equivalence of the conditions on the DV.

So far I have conducted 4 t-tests, 1 for each time point comparing the conditions and found that each are statistically equivalent. I fear that I can't report them independently and conclude statistical equivalence. Do I need to apply a Bonferroni correction? Is what I have done acceptable?

Thank you.

Hi, yes, a Holm-Bonferroni correction makes sense, unless these are 4 independent tests that test 4 independent theories, see http://daniellakens.blogspot.nl/2016/02/why-you-dont-need-to-adjust-you-alpha.html

However, since my t-tests are non-significant (e.g. p = .310) does applying the correction matter - because it is conservative when accepting the alternate hypothesis. Or does the correction affect my equivalence bounds? That would be a problem for me when attempting to claim equivalence.

How do I cite this?

Hi, you can cite the paper as: Lakens, D. (in press). Equivalence tests: A practical primer for t-tests, correlations, and meta-analyses. Social Psychological and Personality Science. DOI: 10.1177/1948550617697177 - thanks!

Thanks for this article!

I have a question about this package. This package provides a way to conduct an equivalence test between two groups using a t-test. I was wondering, since my data has a non-parametric distribution, is it also possible to conduct a two-one-sided test using a Mann-Whitney U test?

I hope you can help me.

Kind regards,

Hello Daniel,

This is a very nice blog post (as always). I have read the article, I am using Jamovi and the TOSTER module, and I have played with the provided Excel sheet. Though I do not understand one thing. I would like to perform an equivalence test on two independent samples, where I do not know the ES to test for, but I want to use raw scores instead. Both the Jamovi module and the Excel sheet allow doing so. Let's say I want to know if the two samples differ (or are equal) on a 7-point scale where +/- 0.5 is the threshold I am interested in. I can specify the raw score as -0.5 and 0.5, but where can I specify the length of my scale? I suppose 0.5 point on a 5-point scale is different to 0.5 on 7- or 9- or 11-point scales. Or am I missing something?

I hope you can help me...